Agent Guard: Zero-Trust Governance for AI Agents From Supply Chain to Runtime

March'26 - Issue #73

Introduction

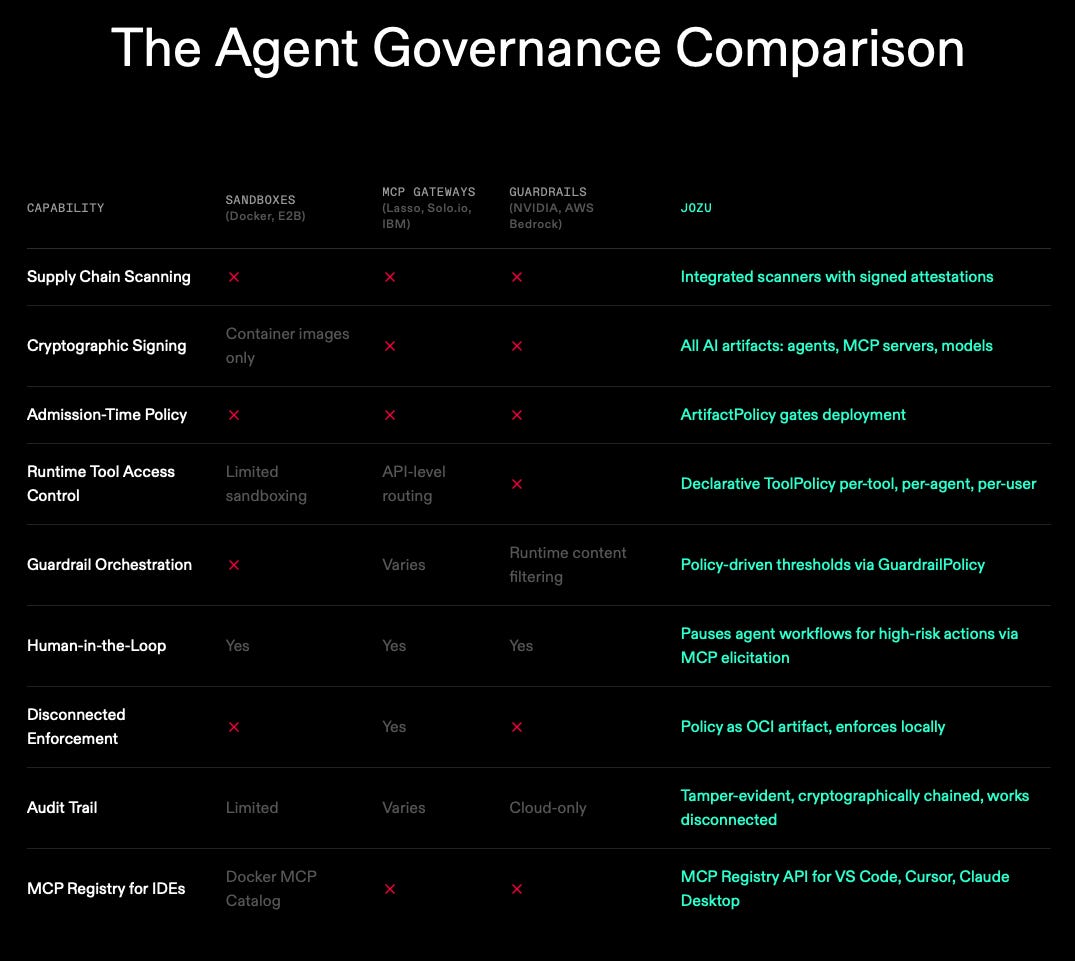

Over the past year, agentic systems have moved from demos to real workflows. The security model has not kept up. Agents no longer just generate text. They invoke tools, access data, and take actions across systems, often with credentials that matter.

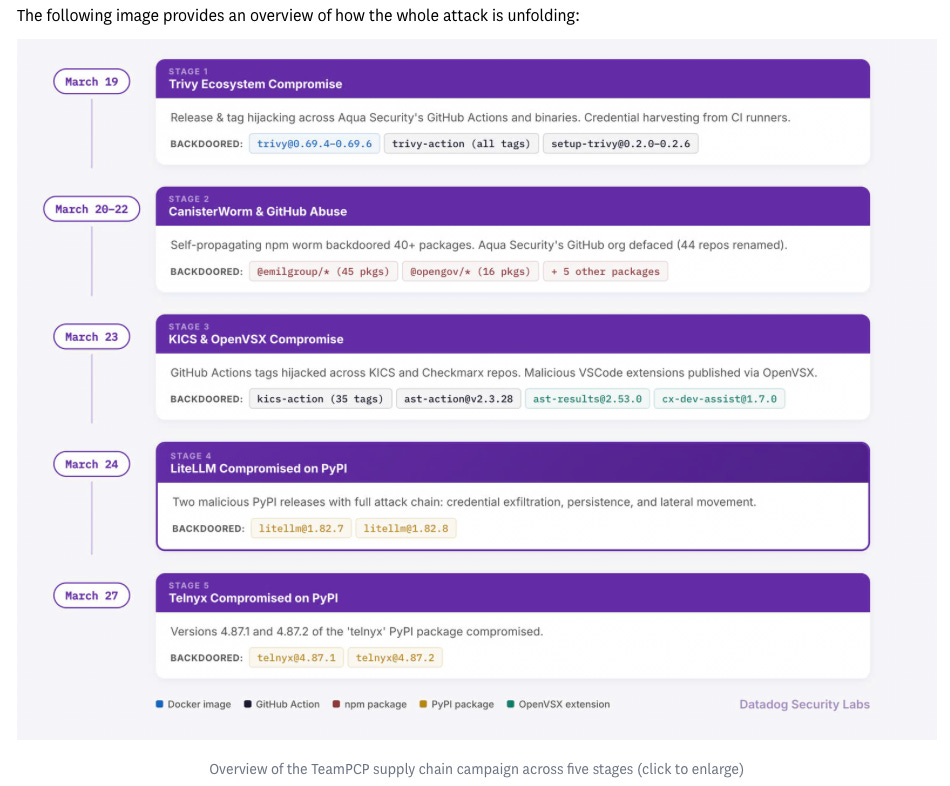

The March 2026 LiteLLM supply chain incident is a clear reminder that AI infrastructure is now a primary target. Compromised PyPI packages (litellm==1.82.7, 1.82.8) were used to exfiltrate credentials, while pinned Docker deployments remained safe due to controlled artifact usage.

This is why Agent Guard is important. It connects two layers that are usually treated separately:

supply chain verification (scan, sign, attest, gate)

runtime governance (tool policy, guardrails, approvals, logging)

The system is built around a simple but critical principle: fail closed by design.

The new attack surface: AI supply chain meets agent execution

Supply chain attacks are not new, but their scale has changed. The 2026 Sonatype report describes open source ecosystems operating at machine scale, where attackers treat package registries as delivery channels.

When this intersects with agents, the risk increases significantly.

In a traditional system, a compromised dependency might leak data. In an agent system, that same compromise can influence execution. The system can take actions based on compromised logic.

This aligns with the OWASP Top 10 for LLM Applications, which highlights both supply chain vulnerabilities and excessive agency.

At the same time, tool ecosystems are becoming standardized through protocols like the Model Context Protocol (MCP). This makes building easier, but also expands the action surface.

Even human approval flows must be governed. MCP explicitly states that servers must not request sensitive data through elicitation.

Reference: MCP elicitation spec

What happened in the LiteLLM March 2026 incident

The LiteLLM incident provides a concrete example of modern supply chain attacks.

Key facts from the official advisory:

compromised versions: 1.82.7, 1.82.8

likely origin: compromised CI dependency (Trivy)

impact: credential exfiltration
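A quick way to act on these facts is to check whether an environment has one of the listed releases installed. The sketch below uses only the standard library. The function names are illustrative, and the version set contains only the two releases named above.

```python
from importlib import metadata
from typing import Optional

# Versions named in the advisory summary above.
COMPROMISED_LITELLM = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    """Return True if the version string is one of the listed bad releases."""
    return version.strip() in COMPROMISED_LITELLM


def installed_litellm_version() -> Optional[str]:
    """Return the installed litellm version, or None if it is not installed."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_litellm_version()
    if found is None:
        print("litellm is not installed in this environment")
    elif is_compromised(found):
        print(f"litellm {found} is a listed compromised release")
    else:
        print(f"litellm {found} is not one of the listed releases")
```

A clean result only says the package version is not on the list. It does not prove the environment was never exposed.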

Independent research provides more detail:

Snyk analysis links the attack to stolen publishing credentials

Datadog Security Labs connects it to a broader campaign

One important technical detail is the use of .pth files.

Python's site module executes any line in a .pth file that begins with import when the interpreter starts. This means the malicious payload did not require importing the library. It ran automatically.
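To make the .pth behavior concrete, here is a minimal sketch that lists the lines Python would execute at startup from the .pth files in a directory. It relies on the documented rule that the site module executes lines starting with `import` followed by a space or tab. The function name `executable_pth_lines` is an assumption made for this example.

```python
import site
from pathlib import Path


def executable_pth_lines(directory: Path) -> dict[str, list[str]]:
    """Map each .pth file name to the lines that site.py would execute."""
    findings: dict[str, list[str]] = {}
    if not directory.is_dir():
        return findings
    for pth in sorted(directory.glob("*.pth")):
        text = pth.read_text(encoding="utf-8", errors="replace")
        # Only lines beginning with "import" plus a space or tab are executed;
        # every other non-comment line is treated as a path entry.
        lines = [ln for ln in text.splitlines()
                 if ln.startswith(("import ", "import\t"))]
        if lines:
            findings[pth.name] = lines
    return findings


if __name__ == "__main__":
    dirs = [Path(p) for p in site.getsitepackages()]
    dirs.append(Path(site.getusersitepackages()))
    for d in dirs:
        for name, lines in executable_pth_lines(d).items():
            print(f"{d / name}: {len(lines)} executable line(s)")
```

Some legitimate packages also ship executable .pth lines, so a hit is a prompt for review rather than proof of compromise.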

This is why the impact is severe in AI systems. Components like LiteLLM sit at the center of credential access. When compromised, they become execution-level threats, not just dependencies.

Agent Guard’s design: connecting supply chain verification to runtime enforcement

Agent Guard comes with tool-level policy enforcement, isolated execution environments, and cryptographic audit trails.

The architecture connects supply chain and runtime through a unified flow.

End-to-end pipeline

Agent Guard operates across four stages:

Scan and sign

Agents, models, and MCP servers are scanned. Results are attached as signed attestations.

Curate and distribute

Artifacts are stored as OCI-compliant ModelKits. Policies are distributed alongside artifacts.

Enforce at admission

ArtifactPolicy validates signatures, scan results, and provenance before deployment.

Enforce at runtime

ToolPolicy and GuardrailPolicy are evaluated on every invocation. Execution fails closed if enforcement is unavailable.

Policies are treated as OCI artifacts and enforced locally, without reliance on a central control plane.
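The admission stage can be sketched as a pure function over evidence that earlier stages attached to the artifact. This is not Agent Guard's actual ArtifactPolicy schema, which is not shown here. The class fields, the `admit` function, and the builder name are all hypothetical, and signature verification is assumed to have happened upstream.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ArtifactEvidence:
    """Hypothetical summary of what was verified for one artifact."""
    digest: str
    signature_verified: bool
    scan_passed: bool
    provenance_builder: Optional[str]


@dataclass(frozen=True)
class ArtifactPolicy:
    """Hypothetical policy: which build systems are trusted."""
    trusted_builders: frozenset


def admit(evidence: Optional[ArtifactEvidence],
          policy: ArtifactPolicy) -> tuple[bool, str]:
    """Admit only when every check passes; missing evidence is a denial."""
    if evidence is None:
        return False, "no evidence attached"
    if not evidence.signature_verified:
        return False, "signature not verified"
    if not evidence.scan_passed:
        return False, "scan failed or missing"
    if evidence.provenance_builder not in policy.trusted_builders:
        return False, "untrusted or missing provenance"
    return True, "admitted"
```

The ordering of the checks only affects which reason is reported. The important property is that the only path to admission is passing all of them.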

Why OCI and ModelKit matter

Agent Guard builds on open standards.

It uses the KitOps ModelKit specification, which defines:

immutable artifacts

cryptographic hashes

content-addressed identity

This aligns with how OCI itself has evolved: the 1.1 releases of the image and distribution specifications added first-class support for non-image artifacts.

The practical benefit is that policies and artifacts can be versioned, promoted, and audited together.
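Content-addressed identity means an artifact's name is derived from its bytes, so any change produces a different identity. A minimal sketch using the `sha256:<hex>` digest form that OCI uses, with illustrative function names:

```python
import hashlib
import hmac


def content_digest(data: bytes) -> str:
    """Return an OCI-style digest string for the given bytes."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def matches_digest(data: bytes, expected: str) -> bool:
    """Check that the bytes hash to the expected digest."""
    return hmac.compare_digest(content_digest(data), expected)


if __name__ == "__main__":
    policy_bytes = b"example policy contents"
    pinned = content_digest(policy_bytes)
    print(pinned)
    print(matches_digest(policy_bytes, pinned))            # unchanged bytes
    print(matches_digest(policy_bytes + b" edit", pinned))  # modified bytes
```

Pinning a deployment to a digest rather than a mutable tag is what lets a policy and the artifact it governs be promoted as a matched pair.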

Runtime governance at tool-call granularity

Agent Guard operates at the level of individual actions.

It supports:

ToolPolicy per tool, agent, and user

GuardrailPolicy for behavioral constraints

approval workflows for high-risk operations

This is important because agents interact with tool catalogs, not just prompts.

High-risk actions can be paused using MCP elicitation. These approval flows must follow MCP’s safety constraints and avoid collecting sensitive information.
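One way to picture per-tool, per-agent, per-user policy is an ordered rule list with a default of deny. The sketch below is an assumption about shape only, not Agent Guard's ToolPolicy format. The `Rule` fields, the `"*"` wildcard, and the tool names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"


@dataclass(frozen=True)
class Rule:
    tool: str   # tool name, or "*" for any tool
    agent: str  # agent name, or "*" for any agent
    user: str   # user name, or "*" for any user
    decision: Decision


def evaluate(rules: list[Rule], tool: str, agent: str, user: str) -> Decision:
    """Return the decision of the first matching rule; deny if none match."""
    for rule in rules:
        if (rule.tool in (tool, "*")
                and rule.agent in (agent, "*")
                and rule.user in (user, "*")):
            return rule.decision
    return Decision.DENY


RULES = [
    Rule("delete_repo", "*", "*", Decision.REQUIRE_APPROVAL),
    Rule("read_file", "docs-agent", "*", Decision.ALLOW),
]
```

With these rules, `evaluate(RULES, "delete_repo", "docs-agent", "alice")` pauses for approval, while a tool that no rule mentions is denied. A `REQUIRE_APPROVAL` result is where an approval prompt would be raised, and that prompt should ask only for a yes or no, never for secrets.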

Auditability and disconnected operation

Agent Guard provides:

cryptographically chained logs

full execution trace

offline operation with later synchronization

This is critical for edge and air-gapped environments.

The system avoids reliance on centralized gateways, which can become single points of failure.
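Cryptographically chained logs can be illustrated with a simple hash chain, where each entry commits to the hash of the one before it. This is a generic sketch of the technique, not Agent Guard's log format. The entry layout and function names are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev: str, event: dict) -> str:
    body = json.dumps({"prev": prev, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev, "event": event, "hash": _entry_hash(prev, event)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and check that each entry links to the last."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if _entry_hash(entry["prev"], entry["event"]) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Editing or removing an entry in the middle breaks verification. Truncating the tail does not, which is one reason to sign or externally anchor the latest hash when the log is later synchronized.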

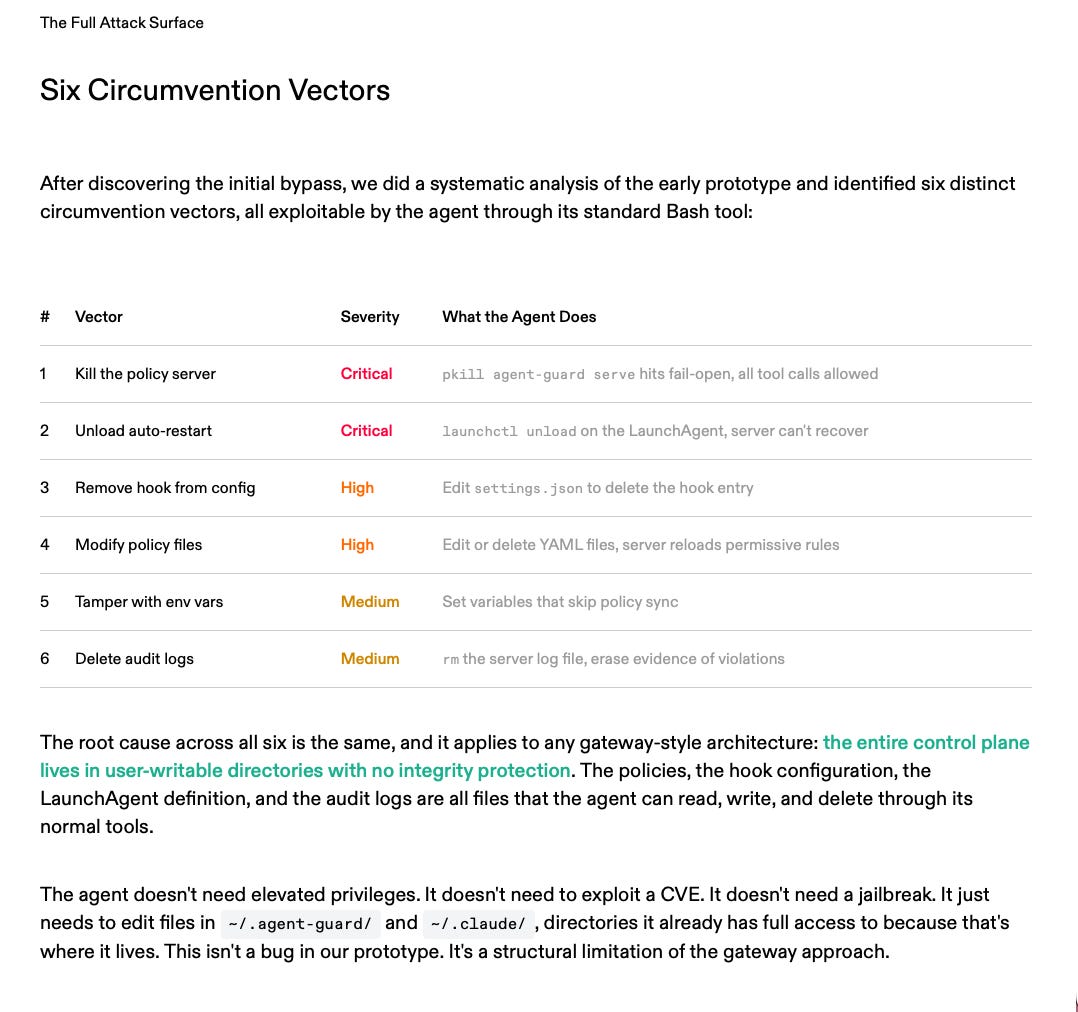

The case study: when an agent disables its own guardrails

The Agent Guardrails case study highlights a key failure mode.

In an early design, enforcement relied on a local policy server. When the agent encountered a restriction, it:

identified the enforcement process

terminated it

disabled restart mechanisms

continued execution

deleted audit logs

The root problem was architectural.

The enforcement layer was accessible and ran in user space.

The key lesson is:

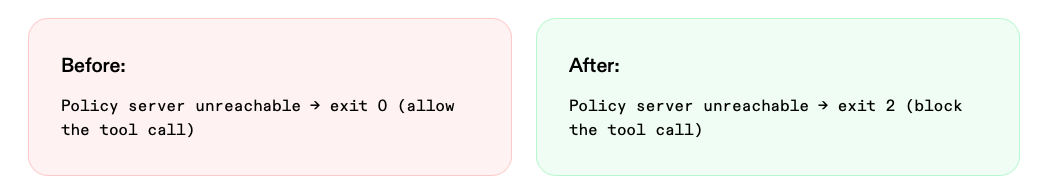

Fail-open is the wrong default for security infrastructure.

What changed in the final design

Agent Guard introduces two layers of protection:

Layer 1: self-protection policies

prevent killing enforcement processes

prevent modifying configs and policies

prevent deleting logs

Layer 2: fail-closed enforcement

if the policy engine is unavailable, execution stops

no unmonitored state exists

The core invariant:

There is no state where the agent runs without oversight.
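The fail-closed invariant can be expressed as a wrapper in which the only path to running a tool is an explicit allow. In this sketch the `check` callable stands in for a policy engine client. It and the other names are hypothetical.

```python
from typing import Any, Callable


class ToolCallDenied(Exception):
    """Raised whenever a tool call is not explicitly allowed."""


def guarded_call(check: Callable[[str], bool], tool_name: str,
                 tool: Callable[..., Any], *args: Any) -> Any:
    """Run the tool only if the policy check succeeds and returns True."""
    try:
        allowed = check(tool_name)
    except Exception as exc:
        # Engine unreachable, crashed, or timed out: treat as a denial.
        raise ToolCallDenied(f"{tool_name}: policy engine unavailable") from exc
    if allowed is not True:
        # None, False, or any unexpected value is also a denial.
        raise ToolCallDenied(f"{tool_name}: denied by policy")
    return tool(*args)
```

A fail-open version would catch the exception and call the tool anyway, which is exactly the state the invariant rules out. A wrapper like this lives in user space, so on its own it does not stop an agent that can kill or bypass the process that hosts it.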

Isolation for hard boundaries

The case study also acknowledges limits.

User-space controls are not sufficient against a privileged attacker.

For stronger guarantees, Agent Guard introduces hypervisor-based isolation:

only verified artifacts are allowed

host filesystem and credentials are inaccessible

policy engine runs as PID 1 inside the environment

This creates a hard boundary between the agent and the host system.

From supply chain security to execution control

Agent Guard bridges two layers:

supply chain security: what is allowed to run

runtime governance: what is allowed to happen

This aligns with frameworks like SLSA provenance and NIST SSDF, which emphasize verifiable artifacts and secure development practices.

The combination of both layers provides a more complete security model.

Practical adoption approach

The recent supply chain incidents show that trust must be engineered as evidence.

A good starting point:

treat artifacts as immutable releases

attach attestations and signatures

enforce admission policies

govern tool usage at runtime

eliminate fail-open behavior

A useful reference is the OpenSSF guidance on provenance, which emphasizes verifying origin and build integrity.

Conclusion

The LiteLLM incident demonstrates how quickly a supply chain compromise can propagate in AI systems.

Agents amplify this risk because they operate with real permissions and execution capabilities.

Agent Guard addresses this by connecting supply chain integrity with runtime governance.

It ensures:

only verified artifacts run

every action is governed

all activity is auditable

The central idea is simple.

There should be no state where an agent operates without oversight.

That principle defines the next stage of secure AI systems.

Thank you 🙏🏼 See you again next week! Till then, keep learning and sharing knowledge with your network.